Abstract

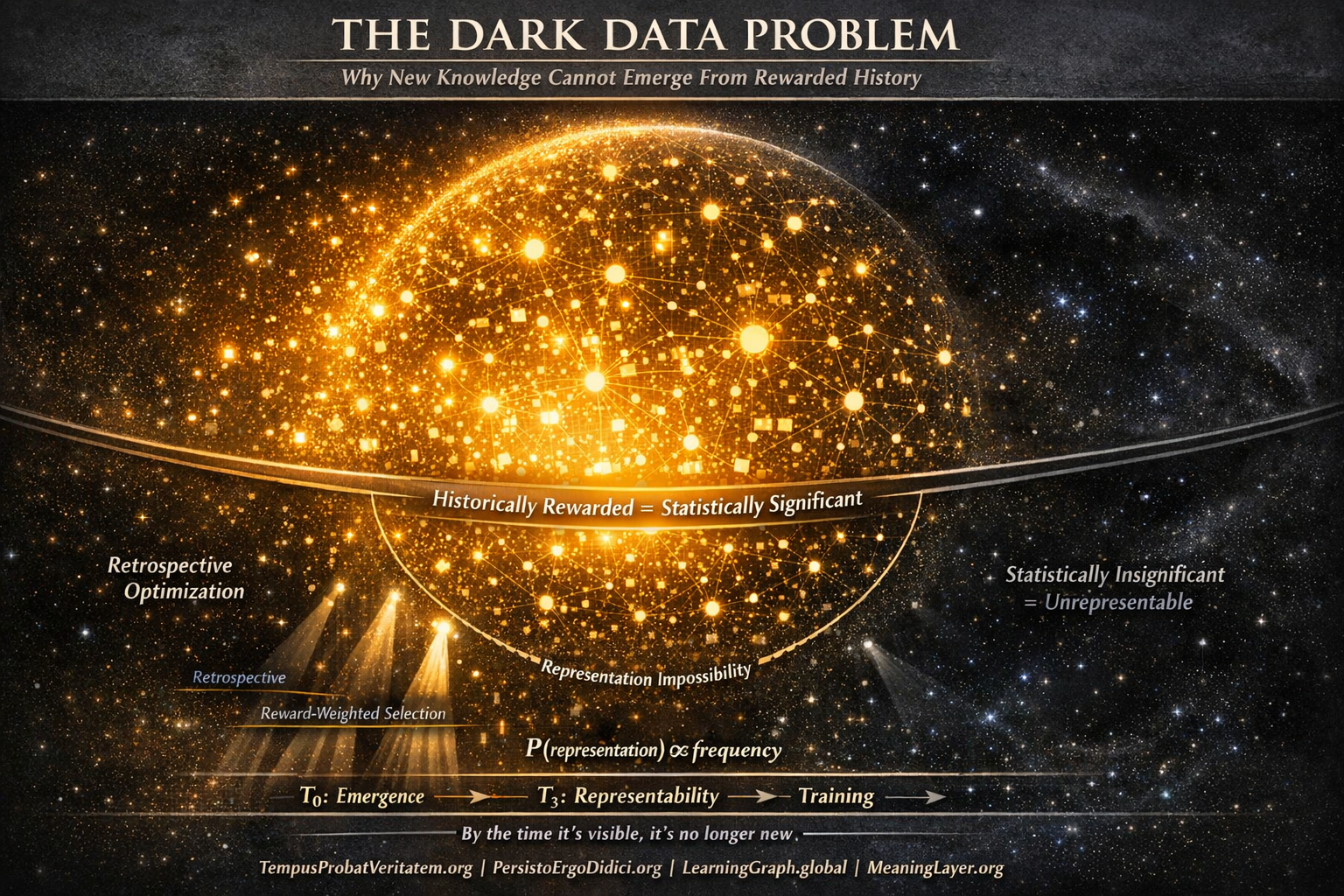

New knowledge is, by definition, statistically insignificant before it matters. Systems trained exclusively on historically rewarded signals therefore face a structural limitation: they cannot represent categories that have not yet achieved visibility, frequency, or reinforcement within the training distribution. This is not a failure of algorithms, scale, or intent, but an information-theoretic consequence of retrospective optimization. The result is a systematic emergence gap in which future-defining ideas remain unrepresentable until after their consequences are already observable.

Knowledge systems face a definitional constraint that no amount of computing power can overcome. When systems learn from historical rewards—what was clicked, cited, amplified, or adopted—they optimize toward patterns that already succeeded. This is not a design flaw. This is the mechanism. But it creates an irreducible problem: genuinely new knowledge, by definition, has not yet been rewarded. It exists below detection thresholds, outside established categories, before metrics register its significance.

This article examines why retrospective optimization produces structural blindness to emergent knowledge, why this blindness intensifies through successive training generations, and what conditions would be necessary—though currently absent—for knowledge emergence to become representable in information systems.

I. The Statistical Insignificance of the New

Novel knowledge possesses a property that makes it informationally invisible to systems trained on historical data: it has no frequency. Before an idea matters, it does not register. Before a pattern proves significant, it appears as noise. Before a framework becomes validated, it exists as isolated signals below any reasonable detection threshold.

This is not a problem with novelty. This is the definition of novelty.

Consider what “new” means operationally in information systems. A new concept has:

No historical frequency: It appears rarely or never in the existing corpus. Systems trained on frequency as a quality signal systematically underweight rare patterns. From the perspective of optimizing for established knowledge this is correct, but it necessarily excludes the genuinely novel.

No established connections: It lacks links to validated frameworks. Systems trained on citation networks, hyperlink structures, or semantic relationships correctly identify well-connected knowledge while filtering isolated insights that have not yet integrated into existing knowledge graphs.

No validation metrics: It has not been tested, adopted, or confirmed through use. Systems trained on engagement signals, satisfaction scores, or adoption patterns correctly prioritize validated knowledge while excluding speculative frameworks awaiting empirical confirmation.

No institutional support: It originates outside established research programs, funding structures, or credentialing systems. Systems trained on institutional signals correctly recognize legitimate scholarship while filtering ideas that have not yet achieved institutional recognition.

Each of these filtering mechanisms is individually rational. Frequency indicates reliability. Connections indicate integration. Validation indicates utility. Institution indicates legitimacy. These are not arbitrary filters—they are reasonable quality signals for established knowledge.

But established knowledge is, by definition, not new knowledge.

The new exists in a state of statistical insignificance. It appears once or twice. It connects to nothing yet. It remains unvalidated. It lacks institutional backing. These properties do not indicate low quality. They indicate temporal position: the idea exists before its significance can be measured through historical signals.

Systems optimized for historical rewards therefore face a structural constraint: the quality signals that reliably identify valuable established knowledge systematically exclude genuinely novel knowledge. This is not correctable through better algorithms. This is information-theoretic necessity. You cannot identify what has not yet been rewarded using signals that measure what has been rewarded.

The emergence asymmetry is definitional: new knowledge must begin as statistically insignificant, but systems trained on statistical significance cannot represent what is statistically insignificant. No amount of model capacity, training data scale, or architectural sophistication overcomes this constraint. The constraint is in the selection function, not the processing capability.

II. Reward as a Selection Function

Training data is not reality. Training data is the residue of selection processes that determined what became observable, measurable, and preserved. Understanding this distinction is critical: systems do not learn from “what exists” but from “what was selected to be recorded after it succeeded.”

This creates systematic bias not through malice or error, but through the fundamental nature of how historical data forms.

Consider the filtering pipeline that produces training corpora:

Creation filter: Only ideas that someone chose to articulate become potentially observable. Ideas that remained unarticulated, seemed too obvious to document, or appeared too speculative to formalize never enter the pipeline.

Publication filter: Among articulated ideas, only those meeting publication standards become accessible. Standards appropriately filter for clarity, evidence, and relevance—but also filter for conformity to existing frameworks, institutional acceptability, and commercial viability.

Preservation filter: Among published ideas, only those deemed worth preserving remain accessible over time. Preservation decisions reflect judgments about lasting value—but also reflect institutional priorities, storage economics, and relevance to ongoing research programs.

Indexing filter: Among preserved ideas, only those meeting indexing criteria become discoverable. Indexing appropriately prioritizes frequently-accessed, highly-cited, and broadly-relevant content—but deprioritizes niche topics, emerging frameworks, and ideas awaiting broader recognition.

Engagement filter: Among indexed ideas, only those generating engagement signals appear relevant to training systems. Engagement indicates user interest—but also reflects marketing effectiveness, network effects, and alignment with existing preferences rather than potential significance.

Each filter is individually defensible. Each serves legitimate purposes. But the cumulative effect is systematic: what survives to become training data is precisely what already succeeded according to existing reward functions.

The training corpus is not a neutral sample of knowledge. It is a highly selected sample of historically rewarded knowledge. Systems trained on this corpus learn to recognize and reproduce patterns that previously succeeded—which is exactly what retrospective optimization should do. But this simultaneously guarantees that patterns which have not yet succeeded remain unrepresentable.
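The cumulative effect of the five filters can be made concrete with a small sketch. The pass rates below are hypothetical placeholders chosen only for illustration, and the filters are assumed to act independently, which real pipelines need not do. The point is only that individually moderate filters multiply into a very small surviving fraction.

```python
from math import prod

# Hypothetical pass rates for each filter stage. These numbers are
# illustrative assumptions, not measurements from any real corpus.
FILTER_PASS_RATES = {
    "creation": 0.50,
    "publication": 0.20,
    "preservation": 0.50,
    "indexing": 0.40,
    "engagement": 0.10,
}


def survival_fraction(pass_rates):
    """Fraction of ideas surviving every filter, assuming independent stages."""
    return prod(pass_rates.values())


def surviving_after_each_stage(initial_count, pass_rates):
    """Return (stage, remaining count) pairs as ideas move through the pipeline."""
    remaining = float(initial_count)
    trace = []
    for stage, rate in pass_rates.items():
        remaining *= rate
        trace.append((stage, remaining))
    return trace


for stage, remaining in surviving_after_each_stage(1_000_000, FILTER_PASS_RATES):
    print(f"{stage:>12}: {remaining:,.0f} ideas remain")
print(f"overall survival: {survival_fraction(FILTER_PASS_RATES):.2%}")
```

Under these assumed rates, roughly two ideas in a thousand reach the training corpus, and every one of them has passed all five reward-aligned filters.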

Reward functions collapse possibility space. Before selection, infinite conceptual variations exist. After selection based on historical rewards, only variations that previously succeeded remain in the corpus. Systems trained on this collapsed space learn to operate within it—to recognize established patterns, generate validated outputs, and avoid combinations that historically failed or never appeared.

This is not failure. This is success at the assigned task: learn from historical data, optimize toward historical rewards, reproduce historically successful patterns. The system performs exactly as designed. But the design necessarily excludes the ability to represent what has not yet been rewarded.

The selection function cannot be removed without removing the ability to distinguish valuable from worthless content. Quality filtering requires selection. Selection requires criteria. Criteria that work reliably reflect historical validation. Historical validation systematically excludes the genuinely novel.

This creates an irreducible tension: the mechanisms that enable effective learning from the past are precisely the mechanisms that prevent recognition of departures from the past.

III. The Representation Impossibility

Under standard statistical learning, distinctions absent from the training distribution cannot be learned as stable decision boundaries. This is not a limitation of current systems. It is a property of information itself, one that limits what a system can represent given the information available to it: a model cannot encode distinctions absent from its training distribution.

The principle can be stated formally: A representation system cannot distinguish between categories that are statistically indistinguishable in its training data.

This has profound implications for knowledge emergence. If new knowledge has not yet achieved sufficient frequency, connection density, or validation to become statistically distinguishable from noise in training data, then models trained on that data cannot learn to represent the new knowledge as a distinct category.

Consider the mathematical structure. Training involves learning a function f: X → Y that maps inputs to outputs based on patterns in training data. The function learns to recognize features that reliably predict desired outputs. Features that appear frequently and correlate strongly with rewards become heavily weighted. Features that appear rarely or show weak correlation become lightly weighted or ignored.

For genuinely new knowledge:

- Frequency approaches zero (appears rarely)

- Correlation is undefined (insufficient examples for statistical validation)

- Feature weights remain minimal (optimization favors established patterns)

The system learns: “This pattern is not significant.” This learning is correct given the training distribution. The pattern is not significant in historical data. But this means the system cannot learn to recognize significance when the pattern becomes important, because the pattern’s statistical insignificance has been encoded into the model’s weights.
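One simple way to see how low frequency turns into unrepresentability is a minimum-count vocabulary cutoff, a preprocessing step used in some word-level models. The sketch below is an illustration under assumptions: the corpus, the token names, and the threshold are all hypothetical, and many modern systems use subword tokenizers that do not discard rare items in this way.

```python
from collections import Counter

UNK = "<unk>"  # shared bucket for every token below the threshold


def build_vocab(corpus_tokens, min_count):
    """Keep only tokens that occur at least `min_count` times."""
    counts = Counter(corpus_tokens)
    return {tok for tok, n in counts.items() if n >= min_count}


def encode(tokens, vocab):
    """Map out-of-vocabulary tokens to the shared UNK bucket."""
    return [tok if tok in vocab else UNK for tok in tokens]


# Toy corpus: two established terms and two rare ones (all hypothetical).
corpus = (
    ["gravity"] * 50
    + ["evolution"] * 40
    + ["novel_idea_a"] * 2
    + ["novel_idea_b"]
)
vocab = build_vocab(corpus, min_count=5)

print(encode(["gravity", "novel_idea_a", "novel_idea_b"], vocab))
# → ['gravity', '<unk>', '<unk>']
```

After encoding, the two rare ideas are literally the same symbol. Nothing downstream of this step can tell them apart, however much capacity it has.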

This creates what we might call representation debt: the inability to recognize patterns that were statistically insignificant during training but become significant after deployment.

The problem intensifies when considering multi-layer representation learning. Deep models learn hierarchical features: low-level patterns combine into mid-level concepts, which combine into high-level abstractions. Each layer’s representations depend on patterns that achieved statistical significance in lower layers.

If novel knowledge fails to achieve significance at the lowest representational layer, it cannot emerge at higher layers regardless of how significant it becomes after training. The representational capacity for that pattern simply does not exist in the model. Adding more layers, increasing model capacity, or extending training duration cannot overcome this constraint—the pattern was filtered out before hierarchical representation building began.

A useful analogy is Gödel-style incompleteness: not in formal equivalence, but in the shape of the limitation. Gödel showed that any consistent, effectively axiomatized formal system strong enough to express basic arithmetic contains true statements that it cannot prove within its own framework. Some truths lie outside what the system can derive. Similarly, sufficiently powerful learning systems cannot represent all significant knowledge when trained on historical data: some significance exists outside what historical statistics can encode.

The parallel extends further. Gödel’s theorems show the limitation is not about system capability but about self-reference and completeness. Learning systems face an analogous limitation: they cannot achieve completeness with respect to future knowledge using only past knowledge, because completeness would require representing patterns that have not yet appeared in the training distribution.

Absence of representation is not error. It is constraint. Systems trained retrospectively optimize correctly for historical patterns while becoming structurally unable to recognize genuinely novel patterns. This is information-theoretic necessity, not algorithmic failure.

No amount of scale overcomes this constraint. Training on larger corpora amplifies the patterns that achieved historical significance while further diminishing the relative weight of rare patterns. Increasing model parameters expands representational capacity for established patterns while offering no mechanism to recognize patterns that remain statistically insignificant.

The representation impossibility is permanent across a model generation. Once training completes, the model’s representational capacity is fixed. Patterns that were statistically insignificant during training remain unrepresentable until the next training cycle—at which point those patterns may have achieved historical significance, but new patterns have emerged that remain statistically insignificant.

The window for novelty representation occurs only during training. Between training cycles, the model operates with fixed representations that encode historical significance patterns. Genuinely new knowledge emerging between training cycles cannot be represented until subsequent training—by which point it is no longer new, and different new knowledge has appeared that remains unrepresentable.

IV. The Emergence Delay Principle

Knowledge becomes legible to information systems only after its effects propagate through observable channels. This creates systematic delay between emergence and representation: by the time a pattern achieves statistical significance sufficient for inclusion in training data, the pattern is no longer genuinely novel—it has already influenced the domain it describes.

This delay is not a technical problem admitting a technical solution. This is a property of how knowledge emergence interacts with historical observation.

Consider the timeline of knowledge emergence:

T₀ – Initial articulation: Idea appears in isolated context. Frequency = 1. Statistical significance = 0. Training systems: pattern undetectable.

T₁ – Early adoption: Small community explores idea. Frequency = 10-100. Statistical significance remains below threshold. Training systems: pattern remains undetectable or dismissed as noise.

T₂ – Growing validation: Evidence accumulates, applications emerge. Frequency = 1,000-10,000. Pattern approaches detection threshold. Training systems: pattern becomes marginally detectable but competes with millions of established patterns.

T₃ – Mainstream recognition: Idea achieves institutional validation, widespread adoption. Frequency = 100,000+. Pattern clearly significant. Training systems: pattern becomes reliably detectable and represented in subsequent training.

T₄ – Domain integration: Idea becomes standard framework. Pattern deeply embedded in corpus. Training systems: pattern heavily weighted, reliably reproduced.

The critical observation: systems trained retrospectively enter the timeline at T₃ or later—the point where patterns have already achieved mainstream recognition. Everything before T₃ remains statistically insignificant in training distributions. Therefore models learn to recognize and reproduce patterns that are already mainstream while remaining blind to patterns at T₀ through T₂.
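The delay can be sketched numerically. The example below assumes a pattern that starts at frequency 1 and grows tenfold per period, a detection threshold of 100,000 (the T₃ figure above), and training snapshots taken every four periods. The growth rate and the cycle length are hypothetical choices made only to produce a concrete number.

```python
def first_detectable_period(initial_freq, growth_per_period, threshold):
    """First period at which the pattern's frequency reaches the threshold."""
    if growth_per_period <= 1:
        raise ValueError("growth_per_period must exceed 1 for the pattern to grow")
    freq, period = initial_freq, 0
    while freq < threshold:
        freq *= growth_per_period
        period += 1
    return period


def first_training_cutoff_at_or_after(period, cycle_length):
    """Snapshots are taken at periods 0, cycle_length, 2 * cycle_length, ..."""
    cycles_needed = -(-period // cycle_length)  # ceiling division on integers
    return cycles_needed * cycle_length


detectable_at = first_detectable_period(
    initial_freq=1, growth_per_period=10, threshold=100_000
)
represented_at = first_training_cutoff_at_or_after(detectable_at, cycle_length=4)

print(f"articulated at period 0, detectable at period {detectable_at}")
print(f"first represented by the snapshot at period {represented_at}")
print(f"total lag from articulation: {represented_at} periods")
```

With these assumed values the pattern crosses the threshold at period 5 but is first captured by the snapshot at period 8. The lag has two parts: the time needed to accumulate frequency, and the wait for the next training cycle.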

But T₀ through T₂ is where emergence occurs. By T₃, the knowledge is no longer genuinely novel—it is undergoing institutionalization. By T₄, it is established consensus. Systems trained on data reflecting T₃ and T₄ learn to recognize consensus while remaining unable to recognize emergence.

This creates the emergence delay: retrospective systems can confirm that knowledge emerged, but cannot identify emergence while it is occurring. They can recognize patterns after those patterns influenced their domain, but cannot recognize patterns before influence becomes observable.

The delay has competitive implications. Systems optimized for historical validation excel at consolidating established knowledge, synthesizing known patterns, and identifying connections within validated frameworks. But they cannot serve as early detection systems for paradigm shifts, transformative frameworks, or genuinely novel approaches—these remain invisible until after they have already transformed their domains.

When emergence does become detectable, the system responds by training on the newly-validated pattern, which was genuinely novel at T₀ but is established consensus by the time it enters training data. This is not failure—it is the correct response to observing a pattern that has achieved historical significance. But it means the system perpetually lags: it represents what was emerging in the past, not what is emerging now.

The lag compounds over successive generations. Each generation trains on patterns that were emerging during the previous generation but are now established. This creates path dependency where each generation’s notion of “normal” reflects the previous generation’s notion of “novel”, while genuinely novel patterns in each generation remain unrepresented until the next.

V. Corollary: Attention Debt Amplifies the Emergence Gap

The representation impossibility would be sufficient by itself to prevent emergence of new knowledge in training systems. But a secondary effect amplifies the problem: even when novel knowledge achieves exposure, fragmented attention prevents integration.

Attention debt occurs when consumption rate exceeds integration capacity. Information environments optimized for engagement maximize exposure while minimizing friction—but integration requires sustained cognitive processing. The result is exposure without comprehension, consumption without internalization.

Training systems then learn patterns that generated engagement without learning patterns that represent integrated understanding. The system reproduces superficial signals while missing conceptual depth—because depth was not present in training data reflecting exposure without integration.

The mechanism operates through cognitive economics. Sustained engagement required for genuine understanding is expensive: time, effort, cognitive resources, opportunity cost. Superficial engagement is cheap: scan, react, move on. Environments optimized for total engagement naturally favor cheap engagement over expensive engagement.

Result: training data increasingly reflects cheap engagement with many patterns rather than deep engagement with few patterns. Systems trained on this data learn to maximize breadth of exposure while failing to represent depth of understanding.

Novel knowledge particularly suffers from this dynamic. Established knowledge benefits from cumulative understanding built over time—brief exposure activates existing mental models. Novel knowledge requires expensive sustained engagement to build new mental models.

When environments favor cheap engagement, established knowledge maintains integration while novel knowledge achieves only superficial exposure. Training systems then learn to reproduce established knowledge (appearing with both frequency and depth signals) while treating novel knowledge as engagement bait (appearing with frequency but no depth signals).

Attention debt therefore creates a compounding effect: novel knowledge begins with statistical insignificance (Section I), faces selection against historical validation (Section II), resists representation in trained models (Section III), and even when achieving exposure, that exposure fails to create integration signals enabling genuine representation.

VI. Training Distribution Lock-In

Each generation of models amplifies the selection biases of the previous generation. This creates path dependency where correction becomes progressively more difficult as training regimes stabilize.

The mechanism operates through accumulation. First-generation models train on historical data reflecting patterns that achieved significance before training began. These models then influence what content gets created, what gets amplified, and what gets preserved—because content that performs well according to first-generation model predictions receives more resources, attention, and preservation priority.

Second-generation models train on data influenced by first-generation predictions. They learn not just from original historical patterns, but from historical patterns plus first-generation amplification effects. This amplifies the original biases: patterns the first generation learned to recognize receive enhanced representation in second-generation training data.

Third-generation models train on data influenced by both first and second generations. Original biases compound exponentially. Patterns that achieved early statistical significance dominate the corpus. Patterns that remained marginally significant become progressively less represented.

By the fourth or fifth generation, the training distribution has locked in: certain patterns appear with overwhelming frequency because they were amplified by every previous generation, while patterns that never achieved early significance remain permanently marginal regardless of potential importance.
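The generational dynamic can be sketched as a simple feedback loop. The model below is an assumption made for illustration, not a description of any real training pipeline: each generation re-weights every pattern's corpus share by raising it to an exponent greater than 1 and renormalizing, so patterns that are already common gain share at the expense of rare ones. The starting shares and the exponent are hypothetical.

```python
def amplify(shares, exponent):
    """One generation of feedback: re-weight by share**exponent, then renormalize."""
    weighted = [s ** exponent for s in shares]
    total = sum(weighted)
    return [w / total for w in weighted]


def simulate_generations(initial_shares, exponent, generations):
    """Return the share distribution at generation 0, 1, ..., `generations`."""
    history = [list(initial_shares)]
    for _ in range(generations):
        history.append(amplify(history[-1], exponent))
    return history


# Hypothetical starting corpus: one dominant, one mid-sized, one rare pattern.
history = simulate_generations([0.70, 0.25, 0.05], exponent=1.5, generations=5)
for generation, shares in enumerate(history):
    print(generation, [f"{s:.4f}" for s in shares])
```

In this sketch the rare pattern's share shrinks in every generation while the dominant pattern's share grows. With an exponent of exactly 1 the distribution would not change at all, so the lock-in here depends entirely on the assumed amplification step.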

This is path dependency in the technical sense from economics: initial conditions determine long-term outcomes, and correction requires extraordinary intervention because small advantages compound into insurmountable leads.

The lock-in is not about any particular pattern being objectively superior. It is about early patterns achieving statistical advantage that then amplifies through each training cycle. Whether those patterns represent optimal knowledge or merely early knowledge becomes irrelevant—they dominate the distribution regardless.

For novel knowledge, this creates an increasingly insurmountable barrier. Knowledge that was genuinely novel at generation one but failed to achieve significance in that generation’s training faces growing difficulty achieving representation in subsequent generations, because each generation further amplifies the patterns that were already significant while further diminishing the relative weight of patterns that were not.

Correction would require deliberate counter-optimization: identify patterns that have been systematically underweighted, artificially amplify them in training data, and continue amplification for multiple generations until they achieve natural statistical significance. But this requires knowing which patterns were incorrectly underweighted—which requires criteria independent of historical significance, which is precisely what retrospective optimization lacks.

The alternative is periodic reset: discard accumulated amplification effects and return to less biased historical data. But this sacrifices the very advantage of multi-generation training—the progressive refinement that comes from building on previous generations’ learning. Reset means losing the benefits of established knowledge representation in order to regain the possibility of novel knowledge recognition.

This creates an impossible choice: continue training on amplified distributions and accept permanent lock-in of early patterns, or reset periodically and sacrifice accumulated learning. Systems cannot simultaneously benefit from multi-generation refinement and maintain openness to genuinely novel patterns. The two objectives are architecturally incompatible.

Training distribution lock-in is therefore not correctable within the retrospective optimization paradigm. It is a necessary consequence of learning from historical data: whatever patterns achieved early significance will dominate indefinitely, while patterns that failed to achieve early significance remain perpetually marginalized.

The compounding accelerates as systems become more powerful. Larger models, trained on more data, across more generations, produce more refined representations of historically significant patterns—which means more sophisticated amplification of early biases, and more systematic suppression of anything that remains statistically marginal.

Scale does not solve this problem. Scale intensifies it. Each increase in model capacity, training data quantity, or generation count makes the system better at reproducing established patterns while making it systematically harder for novel patterns to achieve representation.

VII. Conditions for Knowledge Emergence Representation

The preceding analysis establishes that retrospective optimization cannot represent genuinely novel knowledge. This is not a correctable failure but a definitional consequence. However, we can specify what conditions would need to be satisfied for emergence representation to become possible, though these conditions are currently absent from training systems.

Condition 1: Measurement independent of frequency

Novel knowledge begins with frequency approaching zero. Systems that use frequency as a quality signal necessarily filter novelty. Therefore emergence representation requires measurement methods that detect significance before frequency accumulates.

This requires: semantic evaluation of conceptual coherence, logical consistency, explanatory power, or predictive capability—criteria that can be assessed on single instances rather than requiring statistical validation across many instances.

Current systems: measure frequency, engagement, citation—all require accumulation before significance registers.
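A minimal sketch of the contrast, assuming predictive capability is the criterion being assessed: two candidate rules are ranked once by how often they are mentioned and once by how well they predict a handful of observations. The rules, mention counts, and observations are all hypothetical, and mean absolute error stands in for whatever evaluation a real system would need.

```python
def mean_abs_error(predict, observations):
    """Average absolute gap between a rule's predictions and observed values."""
    return sum(abs(predict(x) - y) for x, y in observations) / len(observations)


# Hypothetical observations as (input, observed outcome) pairs.
observations = [(1, 2.0), (2, 4.1), (3, 5.9), (4, 8.0)]

# Hypothetical candidates: a frequently mentioned rule and one seen only once.
candidates = {
    "established_rule": {"mentions": 10_000, "predict": lambda x: x + 1},
    "novel_rule": {"mentions": 1, "predict": lambda x: 2 * x},
}

# Ranking by accumulated frequency, as the systems described above do.
by_frequency = max(candidates, key=lambda name: candidates[name]["mentions"])

# Ranking by predictive error, which needs no accumulated mentions.
by_prediction = min(
    candidates,
    key=lambda name: mean_abs_error(candidates[name]["predict"], observations),
)

print("preferred by frequency: ", by_frequency)
print("preferred by prediction:", by_prediction)
```

Here the frequency ranking prefers the established rule and the predictive ranking prefers the novel one. The sketch presupposes that suitable observations already exist for the new idea, which is itself a strong assumption.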

Condition 2: Temporal verification across deployment

Knowledge that proves significant in retrospect may have been genuinely novel when it first appeared, or may have been obvious but recently documented. Distinguishing these requires testing whether understanding persists across time independent of continuous exposure.

This requires: longitudinal assessment of whether someone who encountered the knowledge once can apply it months later without continued access—testing internalization rather than momentary comprehension.

Current systems: measure immediate response, engagement duration, short-term retention—none distinguish temporary exposure from lasting integration.

Condition 3: Validation before mainstream adoption

Waiting for mainstream validation before representation means novel knowledge becomes representable only after it stops being novel. Earlier validation requires criteria that assess potential significance before statistical significance emerges.

This requires: evaluation of conceptual foundations, mechanistic coherence, explanatory scope, logical necessity—properties assessable on theoretical grounds before empirical validation accumulates.

Current systems: measure adoption, citation, institutional endorsement—all lag emergence substantially.

Condition 4: Representation portable across systems

Knowledge that depends on a specific platform, institution, or system for visibility cannot emerge independently. Emergence requires that significant patterns become representable regardless of which particular system encounters them first.

This requires: standardized meaning representation enabling cross-system semantic verification—protocols making knowledge discoverable independent of platform-specific optimization.

Current systems: each platform optimizes independently, creating fragmented representations where significance on one platform may not transfer to others.

Condition 5: Escape from accumulated bias

Training distribution lock-in means each generation amplifies previous biases. Breaking this requires either a periodic reset that sacrifices accumulated learning or the maintenance of parallel representations not subject to amplification bias.

This requires: architectural separation between established knowledge representation (benefits from accumulation) and emergence detection (requires bias-free evaluation).

Current systems: unified architectures where the same mechanisms handle both established and novel knowledge, making bias accumulation unavoidable.

These five conditions are not enhancements to current systems. They are categorically different requirements that current architectures do not satisfy and cannot satisfy without fundamental redesign.

The conditions are mutually reinforcing. Without measurement independent of frequency (1), temporal verification (2) cannot distinguish genuine understanding from memorized patterns. Without validation before mainstream adoption (3), portable representation (4) only propagates already-established patterns. Without escape from accumulated bias (5), all other conditions fail as successive generations amplify existing distributions.

Together, these conditions would enable what retrospective optimization cannot: representation of knowledge during emergence rather than after establishment. But satisfying these conditions requires abandoning the core assumption of retrospective optimization—that historical rewards reliably indicate current value.

This reveals the fundamental tension. Retrospective optimization works well for consolidating established knowledge. It fails necessarily at representing emergent knowledge. We can identify what would be required for emergence representation, but the requirements are incompatible with retrospective optimization itself.

Systems can excel at learning from the past or at detecting the genuinely new. They cannot do both using the same architecture. The choice is not about better algorithms or more training data. The choice is between optimization objectives that are mathematically incompatible.

Conclusion: The Permanence of the Gap

New knowledge does not fail to emerge because systems are hostile to it. It fails to emerge because systems optimized for historical rewards are structurally blind to what has not yet been rewarded. Within a retrospective optimization regime, this is not a temporary limitation admitting a future solution. This is a property of how retrospective optimization interacts with temporal emergence.

The dark data problem is not about specific platforms, particular algorithms, or temporary technical constraints. It is about information-theoretic necessity: systems trained on historically rewarded patterns cannot represent patterns that have not yet been rewarded. This claim is conditional on the training regime: given retrospective optimization using reward-weighted selection functions, the emergence gap follows as a logical consequence. This holds regardless of scale, sophistication, or architectural innovation within the retrospective optimization paradigm.

The gap persists because each component of the problem is individually unavoidable:

Statistical insignificance is definitional—new knowledge must begin below detection thresholds.

Selection functions are necessary—training requires filtering, filtering requires criteria, effective criteria reflect historical validation.

Representation impossibility is mathematical—models cannot encode distinctions absent from training distributions.

Emergence delay is temporal—significance becomes measurable only after effects propagate.

Attention debt is economic—environments optimize for cheap engagement, integration requires expensive sustained attention.

Training lock-in is cumulative—each generation amplifies previous biases, correction requires sacrificing accumulated learning.

No individual component can be eliminated without destroying the capability that component provides. Systems cannot learn from historical data without using selection functions. Selection functions cannot work reliably without reflecting historical validation. But historical validation necessarily excludes genuine novelty.

This creates an irreducible constraint: effective retrospective optimization and genuine emergence detection are mathematically incompatible within the same architecture. Systems excel at one while failing necessarily at the other.

The question is not whether future systems will be more powerful. Scale makes systems better at reproducing established patterns while making emergence recognition harder—because scale amplifies whatever patterns already dominated training distributions. Power increases within existing paradigms while making paradigm shifts less representable.

The question is whether systems will ever be able to see what does not yet count. Under retrospective optimization, patterns remain unrepresentable until they achieve historical significance. Systems see what counted historically. What has not yet counted remains structurally invisible until after it transforms the domain—at which point it is no longer new, and different new knowledge has emerged that remains invisible.

When knowledge becomes legible to systems, it has already propagated. When patterns achieve representation, they have already influenced outcomes. When significance registers statistically, emergence has already occurred. The gap between emergence and representation cannot be eliminated within a retrospective optimization architecture because it is temporal: retrospective systems observe the past, emergence occurs in the present, and the present becomes past before retrospective systems train on it.
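The temporal character of the gap can be made concrete with a small sketch. It assumes a hypothetical system that retrains on a fixed schedule and represents a pattern only once its cumulative observations reach a threshold. The mention counts, the threshold, and the retraining interval below are invented for illustration and do not come from any real system.

```python
def first_represented(mentions, threshold, retrain_every):
    """Return the period index of the first retraining run whose data
    contains enough cumulative mentions, or None if that never happens.

    Retraining is assumed to occur at the end of every `retrain_every`-th
    period, using all observations up to and including that period.
    """
    cumulative = 0
    for t, count in enumerate(mentions):
        cumulative += count
        is_retrain_point = (t + 1) % retrain_every == 0
        if is_retrain_point and cumulative >= threshold:
            return t
    return None


# Hypothetical mentions per period for a slowly spreading idea.
mentions = [1, 1, 2, 3, 5, 8, 13, 21, 34, 55]

# The idea exists from the first period in which it is mentioned at all.
emerged = next(t for t, count in enumerate(mentions) if count > 0)
represented = first_represented(mentions, threshold=30, retrain_every=4)

if represented is not None:
    print("emerged at period", emerged)
    print("represented at period", represented)
    print("lag in periods", represented - emerged)
```

Under these assumptions the lag has two parts: the time the pattern needs to accumulate enough observations, and the wait until the next retraining run. Shortening the retraining interval reduces only the second part.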

This is not failure. This is the system operating exactly as designed: learning from history, optimizing toward historical rewards, representing patterns that previously succeeded. Success at the assigned task guarantees structural inability to recognize genuine novelty. There is no architectural modification within retrospective optimization that resolves this tension. The tension is the architecture.

The conditions that would enable emergence representation—measurement independent of frequency, temporal verification, early validation, portable representation, escape from bias accumulation—are not absent due to oversight. They are absent because satisfying them requires abandoning retrospective optimization itself.

We can continue building more powerful retrospective systems. They will become progressively better at consolidating the past while becoming progressively worse at recognizing departures from it. Or we can acknowledge that emergence detection requires a fundamentally different architecture: one that does not exist, that current systems cannot become, and that would sacrifice the very capabilities retrospective optimization provides.

The dark data problem reveals that knowledge emergence and retrospective optimization are competing objectives. Systems optimize for one while failing at the other through information-theoretic necessity. This is not a temporary gap awaiting better technology. This is a permanent tension between learning from history and recognizing what transcends it.

History cannot teach systems to recognize what has no history. Rewards cannot train systems to value what has not yet been rewarded. The past cannot inform systems about what differs categorically from the past. These are not engineering challenges. These are logical impossibilities.

The gap is structural within retrospective optimization because the gap is definitional. Systems that learn from rewarded history cannot represent unrewarded novelty. There is no third possibility. There is only architectural selection: optimize for past consolidation or enable future emergence, reproduce proven patterns or recognize novel departures.

Retrospective optimization selects for consolidation. Under that selection pressure, emergence remains systematically underrepresented. The consequence is structural blindness to novelty. And the new knowledge remains dark.

Published under Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0)

Temporal Verification Protocols: TempusProbatVeritatem.org | PersistoErgoDidici.org | LearningGraph.global | MeaningLayer.org | PortableIdentity.global | ContributionGraph.org