Definition: The Verifiability Horizon

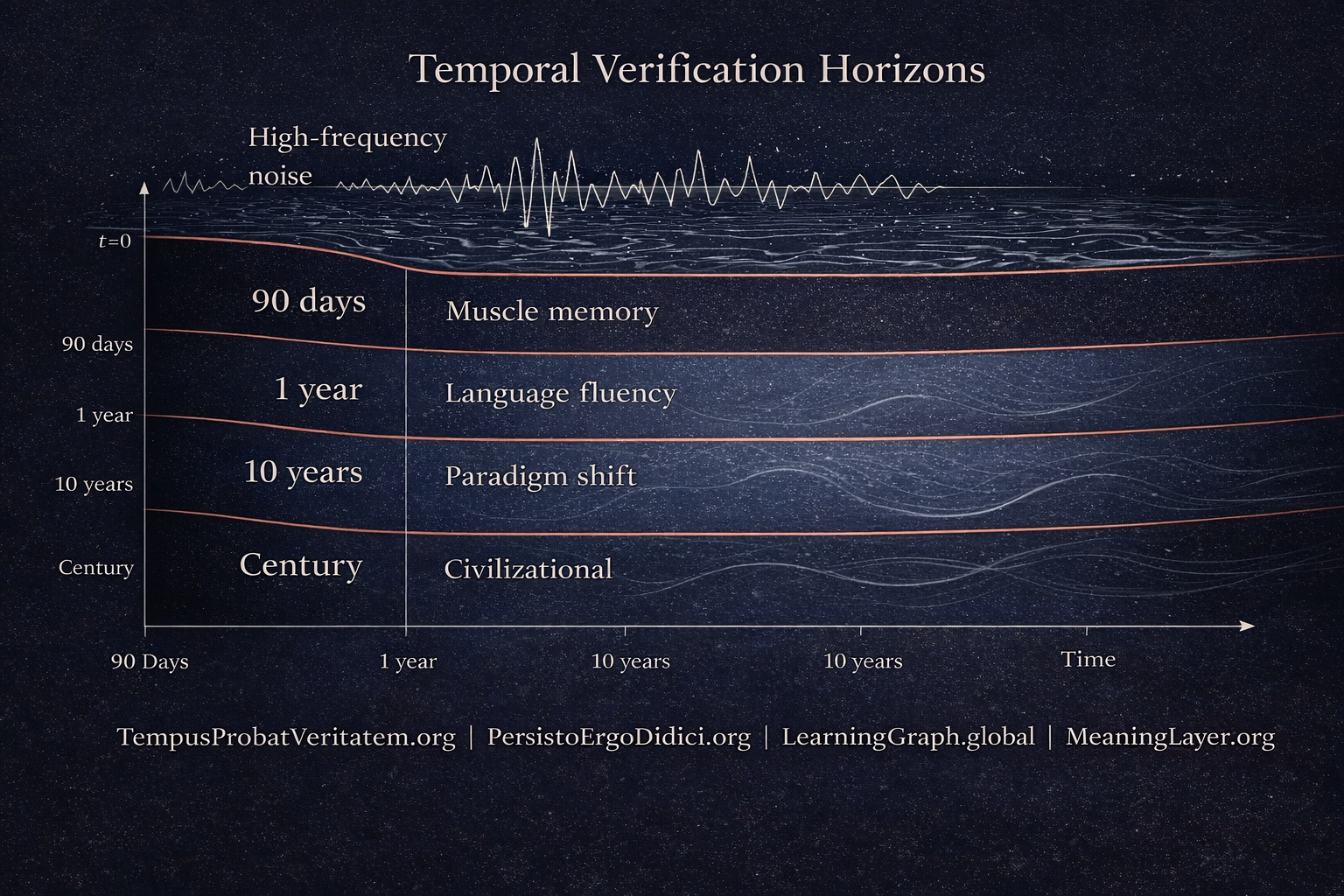

A verifiability horizon is the minimum temporal delay required to distinguish genuine integration of knowledge from behavioral performance optimized for measurement. This horizon exists as a mathematical constraint, not a pedagogical preference. It scales with the depth of learning being verified: muscle memory requires months, paradigm shifts require decades, civilizational learning requires centuries.

What it is: An information-theoretic boundary that separates signal (internalized capacity) from noise (prepared performance).

What it limits: The temporal proximity at which verification can occur without categorical invalidity.

What it is not: A recommendation, a cultural norm, or a correctable inefficiency. It is a structural property of knowledge propagation through substrate.

This constraint was always present. It became critical when artificial systems achieved 100% behavioral fidelity at t=0.

I. The Measurement Collapse

For most of human history, performance at t=0 indicated capacity. If someone could solve a calculus problem during an exam, they understood calculus. If someone could speak fluent German in an interview, they had learned German. The correlation between instant demonstration and internalized capability was reliable enough to build entire verification systems upon it.

This correlation was never perfect, but its failure rate was acceptably low. Cheating existed, cramming existed, but these were statistical noise, not structural threats. The system worked because the cost of faking competence approached the cost of acquiring it.

Between 2023 and 2025, this correlation broke permanently.

Artificial intelligence systems crossed a threshold: they achieved perfect behavioral fidelity at zero temporal delay. An AI could generate fluent German, solve differential equations, write legal briefs, debug code, and compose music—all at t=0, all without internalization, all without learning in any classical sense.

This was not an incremental improvement. This was a phase transition.

The discovery is not that AI can fake competence. The discovery is that instant verification became categorically invalid for deep learning. What we thought was signal was always mixed with noise, but when noise can achieve 100% signal fidelity, measurement collapses.

We did not see this clearly before because we did not need to. The false-positive risk was manageable. Now it is total.

This was always true. We can see it now.

II. The Constraint Table

Different knowledge types have different minimum verification horizons. This is not a recommendation. This is a mathematical fact.

| Knowledge Type | Minimum Verification Delay | Why |
|---|---|---|
| Muscle memory | ≥ 90 days | Neural pathway consolidation requires repeated activation over time |
| Language fluency | ≥ 1 year | Contextual integration cannot be verified without diverse, unpredictable usage |
| Paradigm shift | ≥ 10 years | Generational validation needed; individuals cannot self-verify paradigm adoption |
| Civilizational learning | Century-scale | Multi-generation propagation required for cultural integration |

These delays are not arbitrary. They emerge from the physics of information propagation through substrate.

You cannot verify muscle memory at t=0 because consolidation has not occurred. You can verify performance (the movement can be executed once), but not capacity (the movement can be reliably executed under variable conditions).

You cannot verify language fluency at t=1 month because contextual integration requires exposure to unpredictable contexts. A prepared conversation proves preparation, not fluency.

You cannot verify a paradigm shift at t=1 year because paradigm shifts are multi-agent phenomena. An individual cannot verify their own paradigm adoption—this requires observing how the paradigm propagates through their decisions across a decade of unforeseen challenges.

You cannot verify civilizational learning at t=20 years because civilizations are multi-generational. The learning must survive transmission across people who did not participate in its origin.

Each level requires a longer horizon not because verification is slow, but because distinguishing genuine capacity from borrowed capacity requires observing long-run behavior. Performance can be produced instantly. Capacity shows itself only as observations accumulate over time.
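The constraint table can be read as a lookup from knowledge type to minimum delay. The following is a minimal sketch of that reading, not a reference implementation: the names `MIN_VERIFICATION_DELAY` and `horizon_reached` are hypothetical, and "century-scale" is approximated as 100 years purely for illustration.

```python
from datetime import timedelta

DAYS_PER_YEAR = 365

# Minimum verification delays from the constraint table.
# Assumption: "century-scale" is approximated as 100 years.
MIN_VERIFICATION_DELAY = {
    "muscle_memory": timedelta(days=90),
    "language_fluency": timedelta(days=1 * DAYS_PER_YEAR),
    "paradigm_shift": timedelta(days=10 * DAYS_PER_YEAR),
    "civilizational_learning": timedelta(days=100 * DAYS_PER_YEAR),
}


def horizon_reached(knowledge_type: str, elapsed: timedelta) -> bool:
    """Return True if the elapsed time meets the minimum delay for this knowledge type."""
    return elapsed >= MIN_VERIFICATION_DELAY[knowledge_type]
```

Under these assumptions, `horizon_reached("language_fluency", timedelta(days=30))` is `False`, which matches the claim that fluency cannot be verified at t=1 month. Reaching the horizon is a necessary condition in this sketch, not a verification procedure in itself.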

III. Binary Exhaustion

There are only two logical possibilities:

Either:

- Learning requires temporal integration → instant measurement is categorically invalid for deep learning

Or:

- Learning is instant → empirically falsified (paradigm shifts demonstrably require decades)

No third state exists.

If learning is instant, then paradigm shifts would occur instantly. They do not. Therefore, learning is not instant. Therefore, verification requires temporal delay proportional to learning depth.
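The first inference in the paragraph above is a modus tollens. As a hedged sketch in Lean 4, with proposition names that are hypothetical and chosen only for illustration, that single step can be written as follows. It formalizes only the move from "paradigm shifts are not instant" to "learning is not instant"; the proportionality claim is not captured here.

```lean
-- Hypothetical propositions, named for illustration only.
variable (LearningIsInstant ParadigmShiftsAreInstant : Prop)

-- If instant learning would imply instant paradigm shifts,
-- and paradigm shifts are observed not to be instant,
-- then learning is not instant.
theorem learning_not_instant
    (h_implies : LearningIsInstant → ParadigmShiftsAreInstant)
    (h_observed : ¬ ParadigmShiftsAreInstant) :
    ¬ LearningIsInstant :=
  fun h => h_observed (h_implies h)
```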

This is not an argument. This is a logical exhaustion.

The consequences are total:

- All instant testing (exams, interviews, certifications, assessments) measures performance, not capacity.

- Before AI, performance correlated sufficiently with capacity. The distinction did not matter operationally.

- After AI, performance ≠ capacity. The distinction became categorical.

- Therefore, instant verification is epistemically invalid for any knowledge that requires more than zero time to integrate.

For surface knowledge (facts, definitions, procedures), instant testing remains valid. For deep knowledge (understanding, fluency, paradigm adoption), it does not.

The line between surface and deep is the verifiability horizon.

IV. Information-Theoretic Impossibility

Why can’t better tests solve this?

Because the problem is not measurement precision. The problem is signal frequency.

Deep learning generates low-frequency signals. Paradigm shifts produce observable consequences over years. Fluency produces distinguishable patterns over months. These signals cannot be sampled at high frequency because they do not exist at high frequency.

Instant testing samples once, at t=0. A single sample has no temporal extent, so it cannot resolve any signal that unfolds over time. What it captures is only the high-frequency component: prepared responses, optimized performance, borrowed behavior.

The low-frequency signal that distinguishes internalized capacity from prepared performance is information-theoretically inaccessible at t=0.

This is not a limitation of current tests. This is a limitation of instantaneous sampling of low-frequency phenomena.

You cannot measure ocean tides by sampling once. You cannot detect planetary orbits with a single observation. You cannot verify deep learning at t=0.

No amount of data, no sophistication of models, no cleverness of questioning can overcome this. You are asking for information that does not exist in the temporal slice you are sampling.
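A toy model can make the sampling argument concrete. The sketch below is an illustration under stated assumptions, not a model of learning: `internalized` is a hypothetical slow signal with a one-year period, `prepared` is a hypothetical flat signal built to match it at t=0, and `max_gap` reports the largest difference visible inside an observation window.

```python
import math


def internalized(t_days: float, period_days: float = 365.0) -> float:
    """Hypothetical low-frequency signal that changes slowly over one period."""
    return math.cos(2 * math.pi * t_days / period_days)


def prepared(t_days: float) -> float:
    """Hypothetical prepared performance: matches the t=0 value and stays flat."""
    return 1.0


def max_gap(window_days: float, steps: int = 1000) -> float:
    """Largest difference between the two signals observed within [0, window_days]."""
    return max(
        abs(internalized(window_days * i / steps) - prepared(window_days * i / steps))
        for i in range(steps + 1)
    )
```

In this toy setup, `max_gap(0.0)` is exactly zero: a single sample at t=0 cannot tell the two signals apart. A one-day window yields a gap below 0.001, while a half-period window of 182.5 days exposes nearly the full difference. The window length, not the sampling precision, determines what can be distinguished.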

Instant tests can verify that a behavior can be produced once. They cannot verify that the capacity to produce that behavior is internalized.

After AI, this distinction became total.

V. Economic Inversion

Platform economics requires t→0. Epistemology requires t→∞.

This is not a conflict of values. This is a mathematical incompatibility.

| System | Required Time Horizon | Economic Driver |
|---|---|---|
| Advertising | t ≈ 3 seconds | Click-through rate optimization |
| Social media | t ≈ 24 hours | Viral engagement cycles |
| Search engines | t ≈ 1 year | Ad auction relevance windows |
| Deep learning verification | t → ∞ | Asymptotic signal separation |

Platform architectures are not “evil.” They are economically optimized. The optimization target is to minimize latency and maximize engagement. This requires collapsing time horizons toward zero.

Verification of deep learning requires the opposite: extend time horizons toward infinity to allow low-frequency signals to separate from high-frequency noise.

There is no hybrid. You cannot have a platform that optimizes for t→0 engagement while preserving t→∞ verification. The economic incentive structure and the epistemological requirement are structurally incompatible.

This is why all platform-native credentialing systems (LinkedIn endorsements, GitHub stars, social proof, viral validation) fail to verify deep learning. They are optimized for the opposite time horizon.

This is why all attempts to “fix” platform-based verification by adding better algorithms or more data fail. The problem is not algorithmic. The problem is that the economic function cannot be satisfied at the required time horizon.

Binary choice: economic optimization or epistemic validity. Not both.

VI. Total Architecture Explanation

The verifiability horizon explains why the entire Web4 stack exists.

Working backward:

Why TempusProbatVeritatem.org exists:

Because time is the verification dimension. Instant measurement collapsed. Temporal verification became necessary. “Time Proves Truth” is not a metaphor; it is the structural constraint that all other architecture serves.

Why PersistoErgoDidici.org requires 90+ days:

From the constraint table: muscle memory has a 90-day minimum verification horizon. Any persistence mechanism that operates at shorter timescales cannot distinguish genuine integration from prepared performance.

Why ContributionGraph.org is required:

Lifetime memory is necessary for asymptotic verification. Contributions must be observable across unforeseen contexts over years. Ephemeral records cannot capture low-frequency signals.

Why MeaningLayer.org is needed:

Semantic persistence must survive across verification horizons. If meaning decays faster than verification can occur, the system cannot function. Meaning must persist at the same timescale as the longest verification horizon.

Why PortableIdentity.global is necessary:

Individual ownership of temporal verification records is required because platforms cannot maintain t→∞ horizons. Verification must be portable across platform lifecycles, which are shorter than verification horizons.

The result: Web4 appears not as “innovation” but as the necessary consequence of verifiability horizons becoming critical.

Every component exists to solve a constraint that became unsolvable in platform architecture. None of it is optional.

VII. Timing: Why Now

Why was this not a problem in 1990? Why not in 2010? Why is it critical after AI?

Pre-AI (1990–2022):

Instant tests had low false-positive risk. Performance correlated sufficiently with capacity. A person who could write code during an interview likely understood coding. A person who could speak French in a test likely knew French.

The correlation was imperfect, but the failure modes were manageable. Cramming existed, but crammers could not achieve 100% behavioral fidelity across all domains simultaneously.

Verification horizons could be ignored operationally. They existed mathematically, but their violation did not produce categorical failure.

Post-AI (2023–2026):

Perfect performance without capacity became possible. AI crossed a threshold: 100% behavioral fidelity at t=0 across all domains.

False-positive risk → 100%. Every instant test now samples a mixture of:

- Genuine capacity (low-frequency signal)

- AI-mediated performance (high-frequency noise at 100% fidelity)

These are indistinguishable at t=0.
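One way to read "false-positive risk → 100%" is through Bayes' rule: when a candidate without capacity passes an instant test as often as a candidate with capacity, a pass carries no information. The sketch below assumes this reading; the function name `posterior_capacity` and the numbers in the usage note are illustrative assumptions, not figures from this article.

```python
def posterior_capacity(
    prior: float,
    pass_given_capacity: float,
    pass_given_no_capacity: float,
) -> float:
    """Return P(capacity | passed the instant test) using Bayes' rule."""
    # Total probability of passing, with or without genuine capacity.
    p_pass = prior * pass_given_capacity + (1 - prior) * pass_given_no_capacity
    return prior * pass_given_capacity / p_pass
```

With a prior of 0.3, a pass rate of 0.95 for genuine capacity, and a pass rate of 0.05 without it, the posterior after a pass is about 0.89. If AI-mediated performance raises the pass rate without capacity to 0.95 as well, the posterior falls back to 0.3, the prior. The test result no longer changes what is known.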

Verification horizons became critical for the first time. What was always mathematically true became operationally necessary.

Why exactly now:

AI achieved a specific capability: zero-shot generalization across all human-evaluable domains. This means AI can produce expert-level output in any field without field-specific training, at t=0, indistinguishable from expert output.

This was the phase transition. Before this, instant testing had false positives but also true positives. After this, instant testing cannot distinguish signal from noise.

The measurement collapsed.

VIII. Substrate Independence

Does this apply only to biological brains learning slowly?

No.

The 100-year test: Will this article be valid in 2126?

Yes, if substrate changes do not eliminate the constraint. Let’s verify:

Scenario 1: Biological brains (current)

Verification horizons exist. Neural pathway consolidation requires time. ✓

Scenario 2: Neural implants (near future)

If implants allow instant knowledge transfer, does verification collapse?

No. The implant user still requires time to integrate knowledge into decision-making across unforeseen contexts. The horizon shifts from neural consolidation to contextual integration, but it does not disappear. ✓

Scenario 3: AI-human hybrids (medium future)

If humans offload cognition to AI, does verification become instant?

No. The human still makes decisions over time. Verification requires observing decisions across contexts. The horizon remains. ✓

Scenario 4: Unknown cognitive substrates (far future)

If cognition occurs in substrates we cannot currently imagine, does verification change?

Only if knowledge propagation becomes instantaneous across all contexts simultaneously. This would require violating information-theoretic constraints on low-frequency signal generation. Substrate change does not eliminate signal frequency. ✓

The verifiability horizon is substrate-independent because time as a dimension is permanent. Asymptotic signal separation requires temporal extension. This is not a property of neurons. This is a property of information.

If this article depends on “today’s AI,” it is at most level 300. It must be timeless.

It is.

IX. Conclusion: Reclassification

This was always true. We can see it now.

Instant learning verification is categorically invalid for deep learning through mathematical necessity.

Degrees do not verify capacity. They verify that performance was demonstrated at t=0 during examination.

Interviews do not verify competence. They verify that behavior could be produced during the interview window.

Certifications do not verify understanding. They verify that answers could be generated at the moment of testing.

Only temporal persistence verifies learning.

This is not a call to action. This is not a proposal. This is not a recommendation.

This is a reclassification.

What we thought was verification was measurement of performance. What we thought was learning was behavioral optimization. What we thought was knowledge was output generation.

The distinction always existed. It became critical when noise achieved 100% signal fidelity.

We are not proposing a new system. We are recognizing a constraint that was always there.

The measurement collapsed.

The horizon remains.

Published under Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0)

Temporal Verification Protocols: TempusProbatVeritatem.org | PersistoErgoDidici.org | LearningGraph.global | MeaningLayer.org | PortableIdentity.global | ContributionGraph.org