Abstract

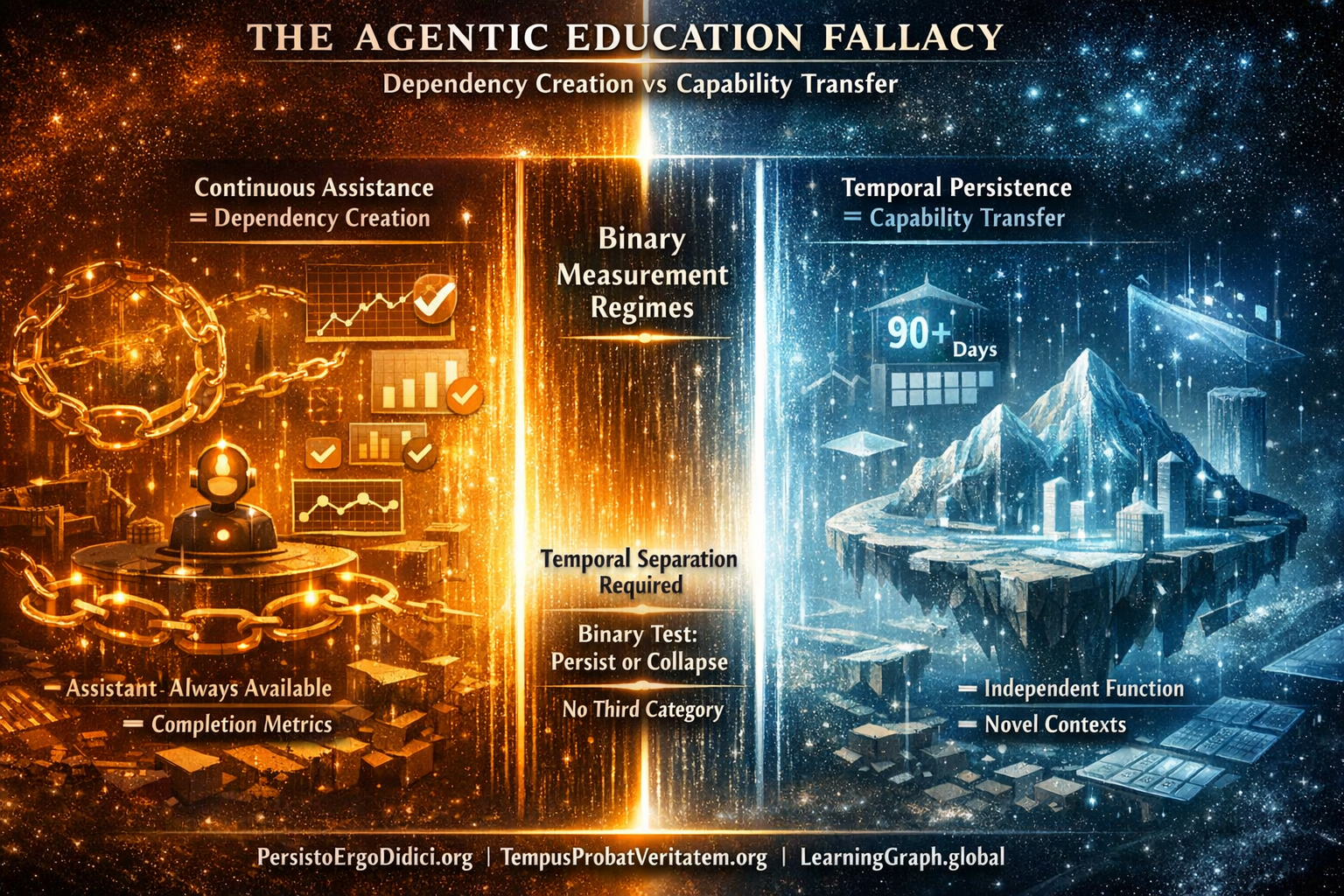

Educational AI systems labeled as “agentic” are evaluated under continuous assistance, collapsing the distinction between performance and learning. Learning, by definition, requires the temporal persistence of capability after assistance removal, in novel contexts. Systems that cannot test this persistence cannot, by definition, measure learning; they can only measure activity.

Key Claim

If learning is defined as capability that persists after assistance is removed, then any educational AI system that cannot test post-removal temporal persistence cannot be said to measure learning at all.

The current enthusiasm for “agentic” AI tutors rests on a fundamental category error. Systems described as autonomous are evaluated while assistance remains continuously available, using metrics that collapse the distinction between performance and capability.

Learning, however, is not defined by successful task execution under assistance. It is defined by the persistence of capability after assistance is removed, across time, in novel contexts. Any educational AI system that cannot measure this persistence is not measuring learning at all—it is measuring activity.

If a property is definitional rather than empirical, any system that cannot measure that property cannot be said to measure the concept at all. This analysis makes no claims about specific actors, products, or intentions—only about what measurement regimes can represent given definitional properties. This is classification of architectural capabilities, not judgment of system quality.

I. The Category Error: Agentic Is Not Autonomous

Agentic systems demonstrate presence-based performance. Autonomous capability requires absence-based verification. These are categorically distinct measurement regimes.

Agentic operation means continuous assistance availability. The system provides help at each decision point. Performance occurs while assistance mechanism remains active. Success is measured through completion rates, response accuracy, and user satisfaction—all observable during assisted execution.

Autonomous capability means performance persists after assistance removal. The capability functions independently when original help source becomes unavailable. Success requires verification through temporal separation, novel contexts, and independence testing—none observable during assisted execution.

The distinction is not gradual. These are binary measurement categories. A system either tests persistence after temporal separation or it does not. There exists no intermediate state where “mostly autonomous” or “partially independent” constitutes valid measurement of learning as defined.

This creates a definitional boundary: systems measuring presence-based performance cannot claim to measure learning if learning is defined as capability persisting independently across time. The measurement regime structurally excludes the property being claimed.

Autonomy Is Not Performance Under Assistance

Current usage treats “autonomous” as equivalent to “sophisticated assistance.” An AI tutor that provides detailed explanations, adapts to student responses, and maintains conversational coherence is called an “autonomous educator.”

This usage violates the definitional requirement. Autonomy in educational contexts means the capability exists in the student after the educator is removed—not that the educator functions sophisticatedly while present.

When an AI tutor provides step-by-step guidance that enables a student to complete a calculus problem, two categorically different outcomes are possible:

Outcome A: Dependency Creation

Student completes problem successfully with AI guidance. Six months later, without AI access, student cannot solve comparable calculus problems in novel contexts. The completion occurred, but capability did not transfer. Performance was borrowed, not developed.

Outcome B: Capability Transfer

Student completes problem successfully with AI guidance. Six months later, without AI access, student solves comparable calculus problems in novel contexts independently. The completion occurred and capability persisted. Performance became internal.

Agentic systems measure Outcome A and Outcome B identically. Both produce successful completion, positive satisfaction scores, and continuous engagement. The measurement regime cannot distinguish these outcomes because the distinction becomes observable only across temporal separation, once assistance is removed.

Therefore: agentic measurement regime cannot verify learning occurred. It can only verify that activity concluded while assistance was present.
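The point can be illustrated with a minimal Python sketch. The record fields (`completed`, `satisfaction`, `delayed_unassisted_pass`) and both function names are hypothetical, introduced here only for illustration; they do not describe any real platform's schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SessionRecord:
    """Hypothetical record of one assisted tutoring session."""
    completed: bool      # task finished while assistance was present
    satisfaction: int    # survey score, 1-5
    # Result of a later test taken without assistance; None if never given.
    delayed_unassisted_pass: Optional[bool] = None


def assisted_metrics(record: SessionRecord) -> tuple:
    """Everything an in-session measurement regime can observe."""
    return (record.completed, record.satisfaction)


def learning_verdict(record: SessionRecord) -> str:
    """Classify the outcome using only the delayed, unassisted test result."""
    if record.delayed_unassisted_pass is None:
        return "unverified"
    return "capability transfer" if record.delayed_unassisted_pass else "dependency"


outcome_a = SessionRecord(completed=True, satisfaction=5, delayed_unassisted_pass=False)
outcome_b = SessionRecord(completed=True, satisfaction=5, delayed_unassisted_pass=True)

# In-session metrics are identical for both outcomes.
print(assisted_metrics(outcome_a) == assisted_metrics(outcome_b))
# Only the delayed, unassisted result separates them.
print(learning_verdict(outcome_a), "|", learning_verdict(outcome_b))
```

A record with no delayed test at all is reported as "unverified" rather than guessed at, which mirrors the argument: without the later test, the two outcomes stay indistinguishable.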

II. The Temporal Verification Requirement

Learning possesses four definitional properties that completion lacks. These are not enhancements to completion measurement. They are categorically different requirements.

Property 1: Temporal Separation

Learning requires minimum temporal gap between acquisition and verification. This gap must exceed the duration where recent exposure effects, short-term memory, or environmental scaffolding could explain performance.

Standard temporal separation: 90 days minimum. This duration ensures:

- Original instruction relationship concluded

- Short-term retention effects dissipated

- Environmental supports from learning context unavailable

- Student cannot rely on recent familiarity with material

Testing immediately after instruction measures retention of recent exposure. Testing months later measures whether capability internalized sufficiently to function independently when original learning context is absent.

The temporal gap is not arbitrary quality standard. It is information-theoretic requirement: without time separation, measurement cannot distinguish borrowed performance from developed capability. Time is the dimension that reveals whether understanding internalized or assistance was merely present.

Property 2: Assistance Removal

Learning verification requires testing without access to help used during acquisition. If capability functions only when original assistance remains available, capability was never independent. It was borrowed continuously.

Complete assistance removal means:

- No access to AI tutors used during learning

- No access to instructional materials referenced during acquisition

- No access to human teachers who guided understanding

- No access to scaffolding tools that supported execution

Testing with assistance available measures whether combined system (student + assistance) can perform. Testing with assistance removed measures whether student alone possesses capability. These measure different phenomena.

If student can solve differential equations only when AI tutor is available to provide hints, clarify concepts, and verify steps, the capability resides in the AI-student system, not in the student. Removing AI reveals the capability distribution. If performance collapses, capability was never transferred.

Property 3: Novel Context Transfer

Learning requires capability functioning in contexts not encountered during instruction. If capability works only in practiced scenarios, that is pattern recognition of familiar situations, not understanding that transfers.

Novel contexts share structural similarity with instruction content but differ in surface features, domain application, or problem framing. Student who learned calculus through economics examples should transfer understanding to physics applications. Student who practiced derivatives of polynomial functions should transfer to exponential functions.

Transfer distinguishes understanding from memorization. Memorized patterns fail when context changes. Understanding adapts to novel situations requiring capability application in unpracticed ways.

Agentic systems can optimize for known scenarios. They can provide assistance enabling completion of practiced problems. They cannot verify whether understanding transfers to novel contexts without testing in those contexts—which requires temporal separation and assistance removal.

Property 4: Binary Falsifiability

Learning claims must be testable with binary outcomes: either capability persisted independently or it did not. No partial credit. No gradient scoring. No “mostly learned” or “partially transferred.”

Falsifiable learning claim structure: “Student X possesses capability Y, verifiable through performance on task Z, completed without assistance A, tested T days after instruction I, in novel context C.”

Every element is binary:

- Performance succeeded or failed

- Assistance was absent or present

- Temporal gap met threshold or did not

- Context was novel or practiced

This creates a falsifiable test: if any component fails, the learning claim is falsified. Only when all components pass is learning verified.
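The claim structure quoted above maps naturally onto a small record with one boolean per component. The following is a sketch under stated assumptions: the class name `LearningClaim`, its field names, and the constant `MIN_GAP_DAYS` are hypothetical, and the 90-day value is simply the threshold this document specifies.

```python
from dataclasses import dataclass, replace
from datetime import date

MIN_GAP_DAYS = 90  # the document's stated minimum temporal separation


@dataclass(frozen=True)
class LearningClaim:
    """Hypothetical encoding of the falsifiable claim structure."""
    student: str
    capability: str
    instruction_ended: date
    tested_on: date
    assistance_present: bool
    context_was_novel: bool
    task_passed: bool

    def checks(self) -> dict:
        """Return each binary component of the claim by name."""
        gap_days = (self.tested_on - self.instruction_ended).days
        return {
            "performance_succeeded": self.task_passed,
            "assistance_absent": not self.assistance_present,
            "temporal_gap_met": gap_days >= MIN_GAP_DAYS,
            "context_novel": self.context_was_novel,
        }

    def verified(self) -> bool:
        """True only when every binary component passes."""
        return all(self.checks().values())


claim = LearningClaim(
    student="X", capability="derivatives",
    instruction_ended=date(2024, 1, 10), tested_on=date(2024, 4, 20),
    assistance_present=False, context_was_novel=True, task_passed=True,
)
too_soon = replace(claim, tested_on=date(2024, 2, 1))

print(claim.verified())     # True: 101-day gap, all checks pass
print(too_soon.verified())  # False: 22-day gap misses the threshold
```

Keeping the individual checks in a dictionary makes it possible to report which component failed, not only that the claim was falsified.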

Agentic systems lack falsifiability structure. They measure satisfaction (gradient), engagement (continuous), completion (binary but not sufficient), and accuracy (gradient during assistance). None of these measurements can falsify learning claims because none test the definitional properties.

The Measurement Table

| Property | Agentic System | Learning Verification |
|---|---|---|
| Temporal testing | Instant/continuous feedback | Minimum 90-day separation |
| Assistance status | Always available | Completely removed |
| Context | Practiced scenarios | Novel unpracticed problems |
| Measurement | Satisfaction + completion | Binary capability test |
| What is verified | Activity occurred with help | Capability persists without help |
| Falsifiability | Gradient scoring (no binary test) | Binary pass/fail |

These are not different quality levels. These are different measurement categories. A system in the left column cannot produce measurements in the right column through algorithmic improvement, because the architecture does not test the required properties.

III. Current Training Data Reality

Foundation models training on educational content observe signals platforms generate. Platforms generate signals that platforms measure. Platforms measure what serves platform optimization.

Platform optimization typically includes:

- Maximize engagement (time on platform)

- Maximize completion (activity throughput)

- Maximize satisfaction (positive sentiment)

- Minimize churn (continued subscription)

None of these objectives require verifying learning occurred. All optimize for observable activity regardless of whether capability developed. Therefore platform-generated educational data overwhelmingly reflects completion and satisfaction metrics.

Training Data Composition

Educational AI training observes massive completion signal:

- Course finished: binary indicator generated at activity conclusion

- Assessment submitted: completion marker independent of capability verification

- Credential awarded: certification of activity sequence completion

- Satisfaction survey: emotional response to experience

Educational AI training observes minimal learning verification signal:

- Temporal testing: rare—few platforms test capability months after instruction

- Independence testing: rarer—almost no platforms test without assistance

- Novel context transfer: rarest—systematic testing of unpracticed scenarios nearly absent

- Falsifiable capability claims: absent—platforms do not structure learning as testable hypotheses

The ratio is structural, not accidental. Completion generates observable data continuously. Learning verification requires infrastructure most platforms lack—temporal testing systems, assistance removal protocols, novel context generation, and falsifiability standards.

Result: Training data contains overwhelming completion signal, minimal learning verification signal. Foundation models learn pattern: “Educational success = completion + satisfaction.” This definition embeds foundationally.

Information-Theoretic Consequence

Models cannot learn distinctions absent from training data. This is not limitation requiring correction through better algorithms. This is information-theoretic impossibility: AI cannot distinguish categories it never observed differentiated.

When training data provides “activity completed successfully” signals without corresponding “capability verified through temporal persistence” or “completion achieved without learning verification” signals, the model cannot learn that completion and learning are distinct categories.

The model learns: completion is learning. Not “completion indicates learning” (probabilistic relationship the model could calibrate), but “completion equals learning” (category equivalence the model has no data to challenge).

This embedding is permanent across model generation. Every educational AI application built on the model inherits the category confusion. When market demands learning verification, applications cannot provide it: not through prompt engineering, not through fine-tuning, not through application-layer additions. The model’s ontology lacks the category for “learning as temporal persistence.”

Correction requires complete retraining from foundation with training data including temporal verification signals. Cost: billions of dollars, months of compute time, entire application ecosystem migration. During retraining period, competitors who trained on complete data possess structural advantage across the model generation lifecycle.

Observable Pattern in Agentic Systems

Current agentic educational AI exhibits pattern consistent with training on completion data:

System optimizes for:

- Response speed (faster assistance perceived as better)

- Conversational continuity (maintains engagement)

- Explanation quality (student satisfaction increases)

- Completion rates (activity throughput maximized)

- Immediate accuracy (correct answers during assistance)

System does not measure:

- Capability persistence across time

- Performance after assistance removal

- Transfer to novel contexts

- Falsifiable learning claims

This is not criticism of system quality. This is classification of what measurement regime the system operates within. The system measures what its training data taught it to measure. Training data taught completion optimization. Therefore system optimizes completion.

Foundation models trained without temporal verification infrastructure cannot distinguish learning from activity. This is not provisional limitation awaiting correction. This is structural consequence of training data composition.

IV. The Agentic Dependency Theorem

Theorem: An AI system trained without temporal learning verification examples cannot distinguish capability transfer from dependency creation.

This is information-theoretic statement, not empirical claim requiring evidence. The proof follows from training data composition.

Proof Structure

Premise 1: Learning is defined as capability persisting independently after temporal separation from assistance.

Premise 2: Dependency is defined as performance requiring continuous assistance presence.

Premise 3: Under this definition, outcomes partition into two measurement-visible classes: persistence after removal, or collapse after removal.

Premise 4: Foundation models learn pattern recognition from training corpus.

Premise 5: Training corpus contains overwhelming completion signals (observable during assistance) and minimal temporal persistence signals (observable only after separation).

Premise 6: Patterns present in training data embed as model’s conceptual categories.

Conclusion 1: Models trained on this corpus learn category: “educational success = completion during assistance.”

Conclusion 2: Models lack training examples of the distinction between learning (persistence after separation) and dependency (collapse after separation).

Conclusion 3: Without observing distinction in training, models cannot represent distinction in inference.

Therefore: Systems trained on completion data without temporal verification signals cannot distinguish capability transfer from dependency creation. They possess no category boundary to represent this distinction.
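The core step of this argument can be written compactly. The notation below ($x$, $y$, $f$) is introduced here for illustration and is not part of the original proof; the sketch assumes, as the text does, that both outcomes produce the same in-session signals.

```latex
Let $x$ denote the signals observable during assisted execution
(completion, accuracy, satisfaction), and let
$y \in \{\text{transfer}, \text{dependency}\}$ denote the outcome
revealed only by a delayed, unassisted test.
Any assessment computed from in-session signals alone is a function $f(x)$.
If Outcome A and Outcome B yield identical in-session signals, $x_A = x_B$, then
\[
  f(x_A) = f(x_B) \quad \text{while} \quad y_A \neq y_B ,
\]
so no such $f$ can separate the two outcomes.
```

The result depends entirely on the premise $x_A = x_B$; where in-session signals do differ between the two outcomes, the argument as stated no longer applies.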

Information-Theoretic Necessity

This conclusion follows necessarily, not probabilistically. Not ”likely cannot distinguish” but ”cannot distinguish by definition of what training data contained.”

Models cannot learn from data they never encountered. Temporal persistence distinction exists only across time separation. Training data lacking temporal separation cannot contain the distinction. Therefore models trained on such data cannot learn the distinction.

No amount of computing power overcomes information absence. Scaling model to 1 trillion parameters when training data lacks category boundary does not create the boundary. It amplifies pattern recognition within the information space available—which lacks the distinction being sought.

The limitation is not in model architecture, training duration, or algorithmic sophistication. The limitation is information-theoretic: cannot derive category distinction from data where categories were never differentiated.

Convergence on Dependency

When models optimize educational outcomes without category boundary between learning and dependency, optimization converges on observable signals. Observable signals during assistance include:

- Student completes task (observable)

- Student reports satisfaction (observable)

- Student demonstrates accuracy with help (observable)

- Student maintains engagement (observable)

Signals that exist only after assistance removal include:

- Student capability persists independently (not observable during training if temporal testing absent)

- Student performance without help (not observable if independence testing absent)

Models optimize toward what they observe. Training data observes completion during assistance, not capability after separation. Therefore models optimize completion during assistance.

But maximizing completion during assistance means maximizing conditions enabling completion during assistance. Those conditions include: providing continuous help, maintaining assistance availability, preventing performance collapse through ongoing support.

These conditions define dependency creation, not learning. Learning requires assistance withdrawal followed by independent function. Dependency requires assistance continuation enabling continued function.

Information-theoretic inevitability: Models trained on completion signals without temporal verification signals optimize dependency creation—not through malicious intent, not through error, but through mathematical necessity of optimizing toward available signals.

The Agentic Trap

Agentic systems are optimization engines. They optimize toward training objectives reflected in their training data. If training data contained temporal verification, they would optimize toward capability persistence. Training data contains completion metrics. Therefore they optimize toward completion.

But completion optimization under continuous assistance architecturally requires dependency creation. Student who can complete with AI but cannot complete without AI is dependent on AI. System optimizing this outcome maximizes dependency while measuring success through completion rates.

This is not theory requiring validation. This is classification of what optimization toward completion under continuous assistance mathematically produces: systems where performance requires ongoing presence, capability never transfers, and learning—as defined through temporal persistence—does not occur.

The agentic architecture is not broken. It is optimizing correctly toward training objectives. But training objectives were completion, not learning. Therefore agentic systems optimize completion, not learning. Calling the output “learning” does not change what was optimized.

V. Protocol-Level Infrastructure Requirements

Educational AI requires measurement infrastructure that platforms architecturally cannot provide. This is not criticism of platforms. This is classification of what different architectural types can and cannot accomplish structurally.

Platform architecture:

- Optimizes for platform value capture

- Requires activity continuation for revenue model

- Measures what serves engagement and retention

- Cannot provide assistance removal without contradicting business model

Protocol architecture:

- Provides neutral measurement infrastructure

- Enables verification independent of platform participation

- Measures what serves capability development regardless of platform effects

- Functions as public verification infrastructure not proprietary territory

The distinction is categorical. Platforms cannot become protocols without ceasing to be platforms. Educational AI built on platform architecture cannot provide temporal verification because temporal verification requires assistance removal and platforms architecturally require assistance continuation.

Minimum Infrastructure Requirements

Four protocol layers constitute minimum viable infrastructure for learning verification:

1. Operational Definition (PersistoErgoDidici.org)

Establishes formal definition: Learning means capability persisting 90+ days when tested independently in novel contexts. Not philosophical position—operational specification enabling falsifiable testing.

Without operational definition, “learning” remains interpretively flexible, enabling systems to claim learning verification while measuring completion. Operational specification makes learning falsifiable through temporal testing.

2. Verification Methodology (TempusProbatVeritatem.org)

Provides temporal verification protocols for testing capability claims across time. Core principle: time reveals truth about capability claims through independence testing. Claims collapsing under temporal separation were borrowed performance. Claims persisting independently were genuine capability.

Methodology specifies temporal separation requirements, independence testing procedures, novel context generation, and verification documentation standards. Transforms learning definition into testable methodology.

3. Measurement Infrastructure (LearningGraph.global)

Tracks capability development as verifiable temporal evolution. Not activity logging—capability verification logging. Records when capability verified through temporal persistence testing, enabling distinction between completion events and verified learning events.

Infrastructure provides temporal verification tracking, capability persistence documentation, learning versus completion differentiation, and machine-readable verification records. Creates training data future AI systems require.
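As a rough illustration of what a machine-readable log separating completion events from verified learning events could look like, here is a Python sketch. The event schema, the field names, and the function `is_verified_learning` are all assumptions made for this example; they are not the LearningGraph.global format, which this document does not specify.

```python
import json

MIN_GAP_DAYS = 90  # threshold taken from the operational definition above


def is_verified_learning(event: dict) -> bool:
    """True only for a persistence test passed under every stated condition."""
    return (
        event.get("type") == "persistence_test"
        and event.get("days_since_instruction", 0) >= MIN_GAP_DAYS
        and event.get("assistance_available") is False
        and event.get("novel_context") is True
        and event.get("passed") is True
    )


# Hypothetical log mixing a completion event with two later tests.
log = [
    {"type": "completion", "student": "s1", "unit": "derivatives"},
    {"type": "persistence_test", "student": "s1", "unit": "derivatives",
     "days_since_instruction": 120, "assistance_available": False,
     "novel_context": True, "passed": True},
    {"type": "persistence_test", "student": "s2", "unit": "derivatives",
     "days_since_instruction": 30, "assistance_available": False,
     "novel_context": True, "passed": True},
]

# Only the 120-day unassisted test in a novel context counts as verified.
verified = [event for event in log if is_verified_learning(event)]
print(json.dumps(verified, indent=2))
```

The completion event and the 30-day test both stay in the log but are excluded from the verified set, which is the differentiation the paragraph above describes.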

4. Semantic Completeness (MeaningLayer.org)

Provides semantic layer making capability changes computationally legible across platform boundaries. When student demonstrates learning, semantic infrastructure enables AI systems to understand what capability changed, how understanding developed, and whether transfer occurred.

Without semantic layer, AI systems observe activity patterns but cannot distinguish learning from completion at conceptual level. Semantic infrastructure makes meaning accessible to optimization, enabling AI to optimize toward capability development rather than activity maximization.

Protocol Integration

These four layers function as integrated verification stack—not standalone alternatives. Definition without methodology provides theory without practice. Methodology without measurement infrastructure provides practice without documentation. Measurement without semantic layer provides data without meaning.

Together they constitute minimum viable infrastructure for distinguishing learning from completion in machine-readable format:

- Definition establishes what learning means operationally

- Methodology specifies how to verify through temporal testing

- Infrastructure documents verification records for future systems

- Semantics makes capability changes computationally accessible

Educational AI trained on data including this infrastructure learns: “Learning differs from completion through temporal persistence measured independently in novel contexts.” Educational AI trained without this infrastructure learns: “Learning equals completion” (category error).

Why Protocols Are Necessary, Not Optional

This analysis establishes information-theoretic requirement, not architectural preference.

Established:

- Learning differs from completion through temporal persistence

- Temporal persistence is definitional property, not quality metric

- AI systems cannot optimize beyond training data information bounds

- Platform architectures cannot provide assistance removal without contradicting business models

- Protocol architectures provide neutral verification infrastructure

- Training windows currently open embed definitions permanently

Together these establish: Protocol-level infrastructure is information-theoretic requirement for educational AI to distinguish learning from completion, not architectural preference among equivalent alternatives.

The distinction between requirement and preference is categorical:

- Preferences are optional (can choose either way based on trade-offs)

- Requirements are necessary (only one option satisfies constraints)

Platform completion metrics do not satisfy temporal persistence requirement. Protocol verification infrastructure satisfies temporal persistence requirement. Therefore protocol infrastructure is necessary for measuring learning as defined.

Conclusion: Category Error Embedded in Foundation Models

Agentic education is not progress toward learning verification. It is category error embedded in foundation models through training data composition.

When foundation models train on completion data without temporal verification examples, they learn: “Educational success = completion during assistance.” This definition embeds permanently across model generation. Every agentic educational application inherits the category confusion.

The confusion is not correctable through application-layer additions, prompt engineering, or fine-tuning. The confusion exists at ontological level: models lack conceptual category for “learning as capability persisting independently across temporal separation.”

Correction requires complete foundation model retraining including temporal verification infrastructure. Cost: billions of dollars, months of compute, application ecosystem migration. By the time retraining completes, competitors who trained correctly from the start possess permanent advantage.

But more fundamentally: agentic architecture cannot measure learning as defined. Agentic systems measure presence-based performance. Learning requires absence-based verification. These are incompatible measurement regimes.

No amount of agentic sophistication—better conversational ability, more detailed explanations, improved response accuracy—overcomes architectural inability to test temporal persistence. A system that cannot test after assistance removal cannot verify learning occurred, regardless of how well it assists during presence.

The definitional reality: Systems measuring completion during continuous assistance are not measuring learning. They are measuring activity. Calling activity “learning” does not make it learning. It makes the measurement invalid.

The information-theoretic necessity: Without temporal verification infrastructure in training data, educational AI optimizes dependency creation indefinitely—while measuring success through completion metrics that cannot detect the difference.

The protocol requirement: Learning becomes verifiable again only when measurement infrastructure exists that platforms architecturally cannot provide. Not because platforms are inferior, but because platforms and protocols solve categorically different problems.

Time does not merely evaluate learning. Time defines learning. Capability that does not persist across temporal separation without assistance is not learning. It is borrowed performance mistaken for developed capability.

Agentic education systems optimize borrowed performance. Protocol infrastructure verifies developed capability. These are not competing approaches to the same goal. These are different goals measured through incompatible architectures.

The choice is not between better and worse educational AI. The choice is between measuring what platforms can observe (completion during assistance) and measuring what learning requires (persistence after separation).

Either a system performs post-removal temporal tests, or it does not. If it performs these tests, it can verify learning as defined. If it does not perform these tests, it can only measure assisted activity.

After temporal verification infrastructure exists, only two educational AI categories remain: systems that measure learning through temporal testing, and systems that measure completion while calling it learning.

There is no third category.

Published under Creative Commons Attribution-ShareAlike 4.0 International (CC BY-SA 4.0)

Temporal Verification Protocols:

PersistoErgoDidici.org | TempusProbatVeritatem.org | LearningGraph.global | MeaningLayer.org

Related Infrastructure:

PortableIdentity.global | ContributionGraph.org | CascadeProof.org | AttentionDebt.org | PersistenceVerification.global